The dominant story about AI is that it is coming for jobs and that AGI is around the corner. Both narratives assume a kind of plug-and-play model of value: a sufficiently capable system arrives, work that used to require people stops requiring people, and the value transfers from labour to capital almost mechanically.

The data on what AI companies are actually hiring for tells a different story.

Across the 9,758 active jobs at the 121 AI companies we track, 1,320 (13.5%) are dedicated to closing the gap between an AI product and a customer's environment. They break down as Solutions Architects (472), Solutions Engineers (249), Customer Success Managers (199), Forward Deployed Engineers (165), Engagement Managers (73), Client Partners (70), Field Engineering Management (67), and Implementation Specialists (25). The category is roughly half the size of the entire engineering function at these companies (2,697 jobs), and 73% of companies in the dataset are hiring at least one role of this kind. We documented the pattern in The Deployment Gap; it has only deepened, and it is one of the clearer signals visible in the current talent meta.
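
The headline figures can be checked directly from the counts quoted above. The short Python sketch below only re-adds the numbers in this paragraph; the variable names are illustrative and nothing here comes from the underlying dataset beyond what the text states.

```python
# Role counts exactly as quoted in the paragraph above.
deployment_roles = {
    "Solutions Architect": 472,
    "Solutions Engineer": 249,
    "Customer Success Manager": 199,
    "Forward Deployed Engineer": 165,
    "Engagement Manager": 73,
    "Client Partner": 70,
    "Field Engineering Management": 67,
    "Implementation Specialist": 25,
}
TOTAL_ACTIVE_JOBS = 9_758   # all active jobs at the tracked companies
ENGINEERING_JOBS = 2_697    # the engineering function, for comparison

deployment_total = sum(deployment_roles.values())
share_of_all_jobs = deployment_total / TOTAL_ACTIVE_JOBS
ratio_to_engineering = deployment_total / ENGINEERING_JOBS

print(f"deployment roles: {deployment_total}")                    # 1320
print(f"share of all jobs: {share_of_all_jobs:.1%}")              # 13.5%
print(f"relative to engineering: {ratio_to_engineering:.2f}")     # 0.49
```

The eight titles sum to the stated 1,320, which is 13.5% of all active jobs and about 0.49 of the engineering headcount, consistent with "roughly half".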

On 4 May 2026, within hours of each other, the two largest AI companies in the world announced that they were standing up consultancies. OpenAI launched The Deployment Company — a $10 billion joint venture with TPG, Brookfield, Bain, SoftBank and Dragoneer, capitalised at over $4 billion. Anthropic launched a $1.5 billion services firm with Goldman Sachs, Blackstone, and Hellman & Friedman. Both ventures embed engineers from the AI labs directly inside customer organisations to build, customise, and run AI systems hands-on. As Goldman's Marc Nachmann put it: "Having the model alone doesn't change your workflows or how you operate. You need people who can combine the technology with what's actually happening in the business." The internal hiring foreshadowed the announcements. OpenAI has 82 deployment-services roles open today across titles including Forward Deployed Engineer, AI Deployment Engineer, and Technical Deployment Lead. Anthropic has 51 across Applied AI Engineer and Applied AI Architect.

In the application layer, role titles are appearing that look like category errors. Harvey, the legal-AI company, has 22 active "Legal Engineer" jobs. Legora, its Swedish challenger, has 18. The job descriptions require a JD and at least three years practising law at a top-tier firm. These hires are lawyers, paid like lawyers and recruited from Vault-50 firms, given a title that contains the word "engineer" because their job is to redesign legal work for an AI system. xAI has 101 active "Tutor" roles open: Physics Tutor, Pure Math Tutor, Model Behavior Tutor for Epistemic Rigor, and AI Tutor in Bengali, Vietnamese, and Hebrew. These are domain experts hired to encode their domain into the model.

If AI were really plug-and-play, none of this would be necessary. You would expect the AI companies that are scaling fastest to look like cloud businesses — heavy on research and engineering, light on services. You would not expect them to be standing up four-billion-dollar consulting arms, hiring entire law firms, or paying physicists to teach physics to models.

The conclusion the data forces is the opposite of the rhetoric. The bottleneck for enterprise AI is not the model. The bottleneck is everything that has to happen between the model and the work — and there is a lot of it. What follows is a mental model for why.

A framework from 1965

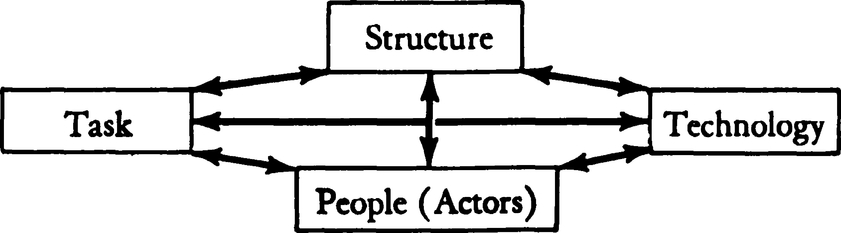

In a chapter published in 1965 in the Handbook of Organizations, an American psychologist of management named Harold Leavitt proposed that any industrial organisation could be understood as a system of four interacting variables: task, structure, technology, and people. He drew them as a diamond, connected by arrowheads in every direction, and argued that no one variable could be moved without compensatory changes in the others. Decentralise the structure and you change the technology, the tasks, and the people. Introduce a new technology and you change everything else.

Fig. 1 from Leavitt's chapter, in which the fourth corner is labelled "People (Actors)".

The diagram is precise in a way that became important later. Leavitt did not label the fourth corner "people". He labelled it "People (Actors)" — and in the text, he qualified the term carefully: "Actors refers chiefly to people, but with the qualification that acts executed by people at some time or place need not remain exclusively in the human domain." It is a single sentence, written sixty years before any of this, anticipating a future where the actor inside an organisational system might not be a person.

By the 1990s, descendants of Leavitt's diamond had been simplified into a triangle — People, Process, Technology — popularised in different forms by Bruce Schneier and others, and adopted as the operating model for IT departments, consulting practices, and change programmes. Three elements, one mantra. PPT assumed a clear sequence. Companies hired people, those people performed a process that created value, and technology was what they used to do it faster and better. In the simplification, two things were lost: the distinction between task and structure, which Leavitt had kept separate, and the qualification about actors. PPT spoke about people, full stop.

For most of the last sixty years that simplification was harmless. People sat at one end. Technology sat at the other. Process was the connective tissue between them. The arrow of value pointed in one direction, and Leavitt's qualification about actors was treated as little more than a footnote.

The arrow has now closed into a loop, and the footnote has stopped being a footnote.

People codify processes. Processes get encoded into technology. And technology, increasingly, performs the work that people used to do. The agent — the AI system asked to handle a task end-to-end — sits at the point where the loop closes. It is no longer simply a tool. It is becoming what Leavitt had the foresight to call an actor inside the system: an entity that performs acts, regardless of whether those acts originate in the human domain.

The framework still works. But the centre of gravity shifts. The interesting question stops being who do we hire and becomes what is the method we are encoding. Process — the middle element of the triangle, the place where Leavitt's careful distinction between task and structure was lost — turns out to be the place where everything is decided.

This is where the deployment hiring is coming from. And it is also where the difficulty of scaling AI inside an organisation actually lives.

The method that is invisible until you have to write it down

When a company hires a senior recruiter, or a tax accountant, or a litigator, it is not really hiring a generic skill. It is hiring someone who has practised a method — the specific way that kind of work gets done in that kind of environment. The method has two parts. The first is the general pattern, learned through training and prior jobs. The second is the local adaptation — which channels matter at this company, which signals are credible to this team, what the bar looks like here, how this hiring manager actually decides. The second part is usually invisible. New hires absorb it over weeks and months. Companies treat the absorption period as a fixed cost of hiring, because there is no alternative.

When the actor in the system is a person, this works because people pattern-match. A litigator joining a new firm doesn't need a written manual; she watches two partners argue a motion and the local method clicks into place. The method is encoded in tacit signals (body language, what gets praised, what gets quietly redrafted), and humans have evolved to extract method from social context.

When the actor in the system is an agent, this stops working, because the agent cannot pattern-match its way into the local method. It can pattern-match from training. It cannot tailor its work to a particular environment without being shown how. Someone has to extract the method, write it down, give the agent the right context at the right step, evaluate whether the output is good, and iterate. The cost that used to be absorbed silently inside the onboarding period now has to be paid up front, in legible artefacts, before the agent does its first hour of useful work.

This is the method gap.

It is worth being explicit about what method contains, because the word does a lot of work in this argument. A method is not a single thing. It is a layered set of artefacts: the practices that distinguish good work from poor work, the conventions of a particular environment, the judgments that experienced operators make almost without thinking, the evaluations that determine whether an output is acceptable, the escalation paths when the work goes wrong. Some of these are written down in standard operating procedures, runbooks, and style guides. Most are not. They live in code review comments, in the way a partner redrafts a junior's memo, in the questions a senior nurse asks a junior nurse on handover, in the tacit "we don't do it that way here" that absorbs new hires into a culture. The method gap is the gap between this layered, mostly-tacit set of artefacts and an agent's ability to perform against any of it.
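
One way to picture the layers is as an inventory in which each artefact is marked as written down or tacit. The Python sketch below is purely illustrative: the `Artefact` type, the example entries, and the `written_down` flags are assumptions made up for this illustration, not data from any real organisation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artefact:
    layer: str          # which layer of the method this belongs to
    example: str        # where it lives today
    written_down: bool  # assumed flag: is there a legible artefact for it?


# Hypothetical inventory for a single team, using the layers named above.
method = [
    Artefact("practices", "style guide for client memos", True),
    Artefact("conventions", "'we don't do it that way here'", False),
    Artefact("judgments", "how a partner redrafts a junior's memo", False),
    Artefact("evaluations", "what counts as an acceptable output", False),
    Artefact("escalation paths", "runbook for when the work goes wrong", True),
]


def method_gap(artefacts):
    """Return the artefacts an agent cannot yet perform against: the tacit ones."""
    return [a for a in artefacts if not a.written_down]


gap = method_gap(method)
print([a.layer for a in gap])
```

In this toy inventory the gap is the three tacit layers; a human hire would absorb them on the job, while an agent needs each one turned into something it can be shown.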

It explains the deployment hiring. The 1,320 jobs in the dataset are doing one of three things, and all three are method-extraction work. Solutions Architects and Solutions Engineers translate the customer's existing process into something the AI product can run against. Forward Deployed Engineers embed inside the customer's operations to learn the local method directly, then encode it. Customer Success Managers and Engagement Managers run the iterative loop that catches everywhere the method was tacit, surfacing it so the agent can be retrained against it. The role of "deployment" in an AI company is not really sales or support. It is the function whose job is to extract method from a customer's environment and translate it into something an AI system can execute against.

It explains the consulting JVs. The bottleneck the 4 May announcements are trying to break is not engineering capacity. It is the scarcity of people who can sit inside a mid-market PE-owned company, understand how the finance close actually runs or how claims actually get adjudicated, and turn that understanding into a working agent. Management consultants have always been valuable for the same reason: their accumulated knowledge of how a function should run is, itself, codified method. The AI labs are not disrupting consulting; they are absorbing the parts of it that matter most for getting models to work, and rewiring the economics around it.

It explains the Legal Engineers. The gap between "an AI that can pass the bar" and "an AI that can be used by a partner at a Magic Circle firm to draft a credit agreement" is not a gap in model capability. It is a gap in method. It lives in the dozens of small, contextual decisions that distinguish a junior associate's draft from a partner's draft, in the conventions of a specific practice area, in the way a particular deal is actually run. Closing that gap requires someone who has done the work and can write down how it is done. Harvey could have hired more software engineers. It is hiring lawyers because lawyers are the ones who carry the method.

And it explains the tutors. xAI's 101 Tutor roles are domain experts hired to encode disciplinary method directly into the model — the standards of physics, the conventions of mathematical proof, the cadences of conversation in Bengali. Where Harvey embeds method at the application layer through Legal Engineers, xAI embeds method at the model layer through Tutors. The mechanism is different. The activity is the same.

Why the new vocabulary suddenly makes sense

The clearest evidence that the method gap is real is the vocabulary the AI industry has organised itself around in the last eighteen months.

Andrej Karpathy, in June 2025, popularised the term context engineering — "the delicate art and science of filling the context window with just the right information for the next step." Tobias Lütke described it as "the art of providing all the context for the task to be plausibly solvable by the LLM." The term displaced prompt engineering almost overnight in serious LLM applications, because prompting implied a clever instruction and context engineering implied something larger: assembling, at runtime, the specific information an agent needs to perform a step well.

Around the same time, evals moved from a niche ML-research term into a standard part of the production-AI vocabulary: frameworks for checking, repeatedly and at scale, whether an agent is doing the work correctly. Harnesses described the scaffolding that surrounds an agent: the tool calls, the memory, the control flow, the retries. MCP, Anthropic's Model Context Protocol, gave agents a clean, structured interface to software whose method was already encoded. Anthropic's own Applied AI Engineer postings list the required production experience as: "prompting, context engineering, agent architectures, evaluation frameworks, and deployment at scale."

The five items in that list are not separate technical practices. They are five names for parts of the same activity: making implicit method explicit so an agent can perform against it.

They are also, almost line-for-line, what you would have done to get a new human hire productive. The runbook is the harness. The dashboards are the context. The "this is what good looks like" review is the eval. The org chart and the escalation paths are the agent architecture. The reason the human equivalent was usually informal is that humans tolerate informality. Agents do not. So the work has to be written down.
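
The mapping can be made concrete with a toy harness. The Python sketch below is a minimal illustration under stated assumptions, not any vendor's implementation: `Step`, `run_with_harness`, and `stub_agent` are hypothetical names, the recruiting steps are invented, and the "agent" is a stand-in function rather than a model call. Each step carries its context and its eval, the loop retries, and a step that never passes is escalated.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    context: str                          # what the agent is shown at this step
    is_acceptable: Callable[[str], bool]  # the eval: "what good looks like"


def run_with_harness(agent, steps, max_attempts=2):
    """Run each step in order, retry on a failed eval, escalate when retries run out."""
    results = {}
    for step in steps:
        for _ in range(max_attempts):
            output = agent(step.name, step.context)
            if step.is_acceptable(output):
                results[step.name] = output
                break
        else:
            # No attempt passed the eval: hand the step to a human.
            results[step.name] = "ESCALATED"
    return results


def stub_agent(task, context):
    """Stand-in for a model call: it can only use the context it is given."""
    if context:
        return f"{task}: drafted using {context}"
    return f"{task}: generic draft"


# Hypothetical runbook: the second step has no written-down local context.
steps = [
    Step("screen candidate", "local hiring bar for this team",
         lambda out: "local hiring bar" in out),
    Step("draft offer", "",
         lambda out: "compensation bands" in out),
]

results = run_with_harness(stub_agent, steps)
for name, outcome in results.items():
    print(f"{name} -> {outcome}")
```

The first step passes because its local method was supplied as context. The second is escalated because nothing about compensation bands was ever written down, which is the method gap appearing as a failed eval rather than a slow onboarding.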

This also explains why the agent layer arrived first in software engineering. It was not because engineers were uniquely automatable. It was because the technology of software engineering is itself a method-rich environment. Version control encodes a discipline of tracking change. CI/CD encodes a discipline of testing before deploying. Linters encode style rules. Code review encodes distributed verification. Decades of method had already been codified, deliberately, into the tooling. An agent dropped into that environment inherits all of it for free. The same agent placed inside, say, a hospital's discharge process — where method lives in the heads of nurses, where rules vary by ward, where documentation is partial, where feedback loops are days long — is no less capable. The environment is far less codified. The agent runs into the method gap and stalls.

Cursor, Codex, and Claude Code did not work because models suddenly got better at code. They worked because code was the place where the method had been written down already.

What follows from this

A few things follow from the framework that the data will keep testing.

The first is that the bottleneck for enterprise AI is no longer model capability. Every senior person at every major lab has been saying some version of this for a year, and the 4 May announcements were the most public confirmation of it. The bottleneck is the scarcity of people who can extract the method from a real organisation and encode it in a way an agent can run against. This is why both labs spent the equivalent of a small acquisition standing up firms whose only job is to do that work.

The second is that the premium is shifting from generic skill to applied method. A generalist engineer is less rare than they were eighteen months ago. A generalist engineer who can implement an agent inside a regulated workflow is among the most expensive hires in the market. A senior associate at a top law firm earns, in their existing job, more than most software engineers; Harvey is hiring those associates as Legal Engineers because that combination of legal method and product instinct is what makes the product work. The same recomposition is showing up in finance, in healthcare, in security. The role title that contains both a domain word and an engineering word — Legal Engineer, Compliance Engineer, Applied AI Architect — is the marker of a role that has been redefined around method extraction.

The third is that the companies that pull ahead, both as AI vendors and as AI adopters, are the ones that treat method as a first-class artefact. They write playbooks. They build evaluation suites. They put domain experts on engineering org charts. They invest in tooling that turns implicit knowledge into explicit specification. The places that will struggle are the places where method lives only in people's heads and where the gap between "we have AI" and "AI does the work" is therefore unbridgeable — not for any reason to do with models, but because there is nothing for the model to be applied to.

The fourth is that the shape of the method gap is the shape of the AI deployment curve. Where method has already been codified — software, structured data, design systems — agent products will arrive faster and become more reliable. Where method remains tacit — most of healthcare, most of physical operations, most of senior judgment work — agents will be slower, more uneven, and more dependent on the deployment muscle. The order in which industries get transformed by AI will be largely predictable from how much of their method is already written down.

Leavitt's framework was about interdependence. He argued that no one element of the diamond could be moved without disturbing the others — and was careful, even in 1965, to leave room for actors who weren't people. The framework's value was its insistence on that mutual constraint, and its quiet anticipation that the cast would change.

When the actor inside the system is a model, the four elements collapse into a tight circle. People codify the method. The method is encoded in the technology. The technology performs the task. The task reshapes the people. And around again.

The companies that are winning the AI era are the ones building this loop deliberately, with the method extracted and made explicit at every turn. The deployment teams, the consulting JVs, the Legal Engineers, the Tutors, the context-engineering literature — they are all symptoms of the same realisation. AI is not plug-and-play. The model is not the bottleneck. The method is.

The hiring is just the first place that realisation becomes visible. The harder work — naming the methods, codifying them, deciding what to augment with tooling and what to leave to humans, building the evaluations that tell you whether any of it is working — is what every organisation now serious about AI is going to spend the next several years on. The method layer is not a metaphor. It is a real layer, with structure, that can be designed and invested in. The companies that build it well will get the value the model alone was never going to deliver.